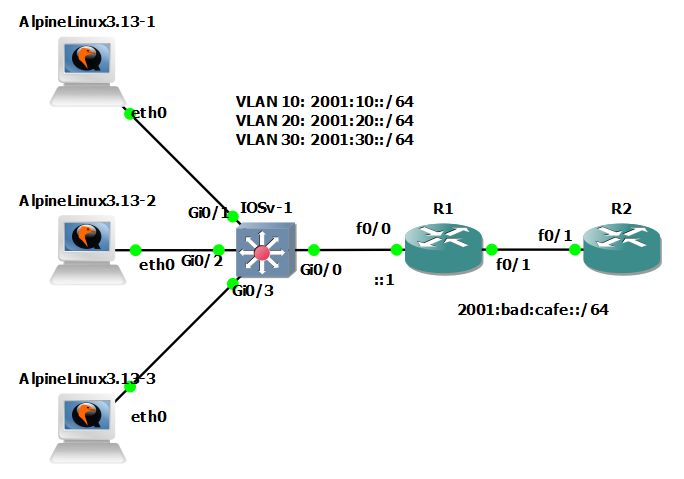

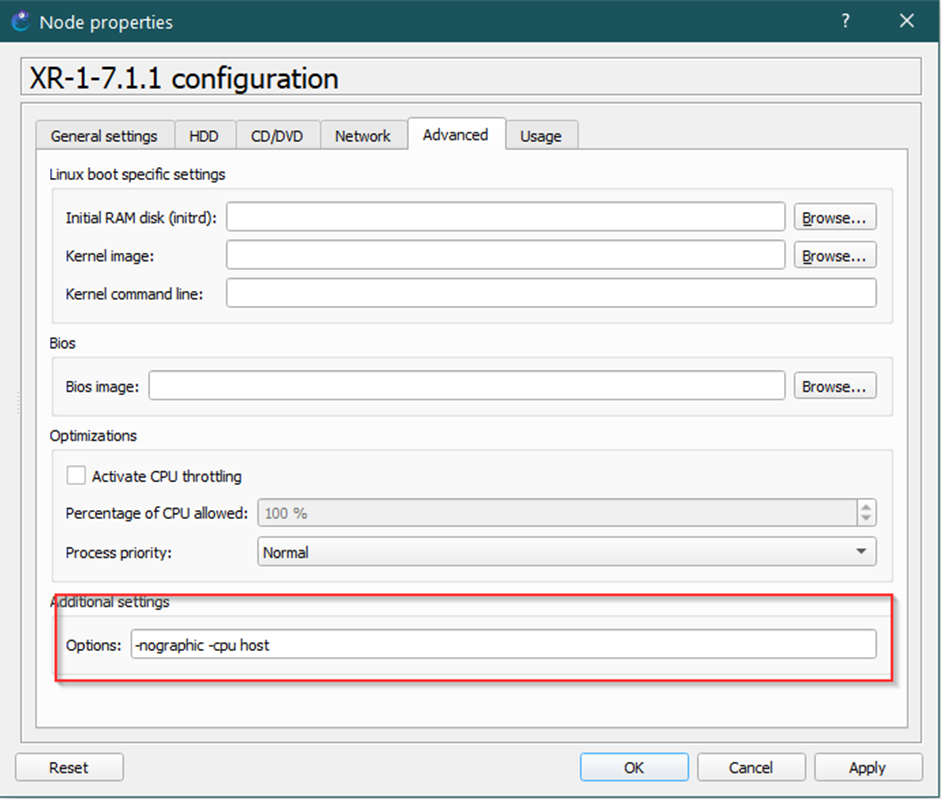

UNetLab (UNL) is a tool that integrates several emulators (dynamips, qemu, IOL) into a single solution in which we can run several network systems (routers, switches, sensors, PCs, ...). The list of supported images is here: http://www.unetlab.com/documentation/supported-images/index.html.

Currently, UNetLab can be installed and run on several platforms (the list is here: http://www.unetlab.com/documentation/index.html#toc0 ). However, I'm currently doing some experiments with LXC, and I'm able to run UNL within an LXC environment too. This article describes how to do it.

Prerequisites and initial state

I'm using the following software versions and starting from the following initial state:

- a host machine with Linux Mint 18.0 Cinnamon 64-bit installed

- LXC installed, and a little knowledge of it

- The network configuration uses bridged connectivity over a physical NIC. This is required for LXC containers that should not use the NATed adapter (lxcbr0) but should have open LAN connectivity with a directly accessible IP address (private or public). My container will therefore use the bridge0 adapter bridged over the eth0 NIC.

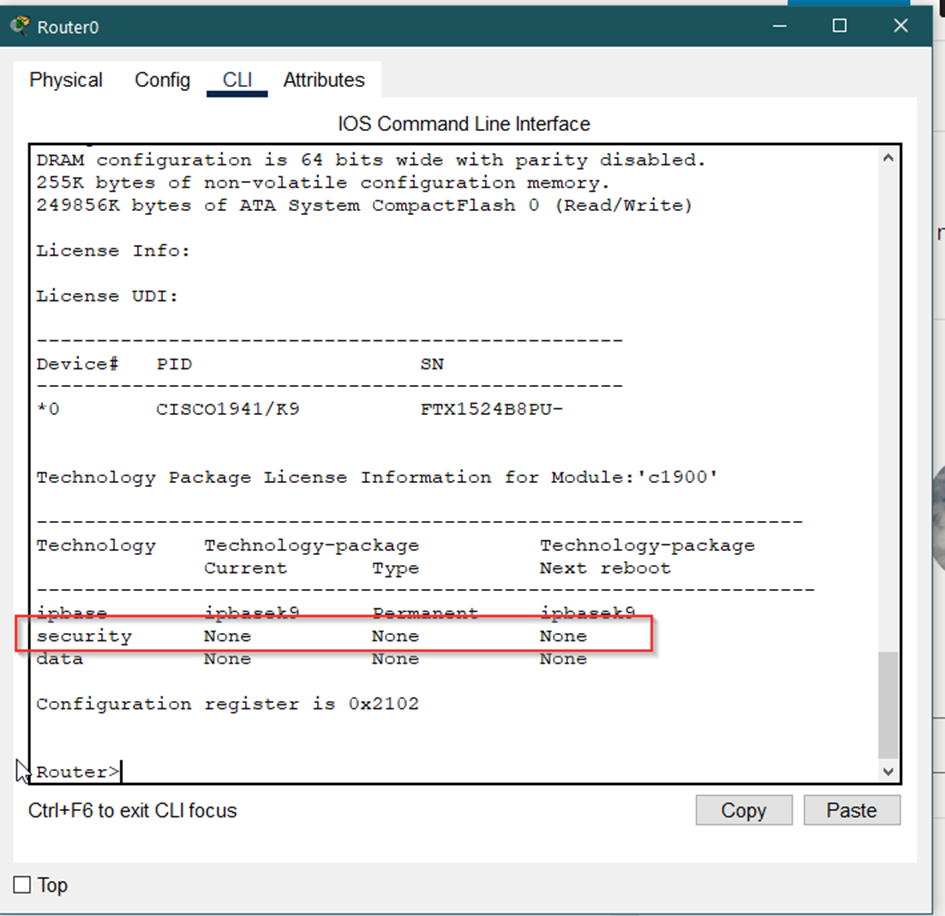

- Cisco/JunOS images, as they are not included

- internet connectivity

Preparing the LXC container

As Wikipedia says:

LXC (Linux Containers) is an operating-system-level virtualization method for running multiple isolated Linux systems (containers) on a control host using a single Linux kernel.

It will allow us to run UNL within a virtual environment while keeping the main host system simple and clean (as virtualization does).

At the time of writing (December 2016), UNL supports installation only on Ubuntu 14.04 x64. Other Linux distributions and 32-bit x86 are not supported. I've tried Ubuntu 16.10 and it really did not work.

Therefore, as the first step, we need to create and install the required LXC container. I usually use the online LXC templates. So type

lxc-create -n unetlab -t download

Here the command creates an LXC container (think of it as a lightweight virtual machine) named unetlab from a template downloaded from the internet. You then need to choose the Distribution (type ubuntu), then the Release (type trusty) and finally the Architecture (type amd64).

The final output looks like:

    Using image from local cache
    Unpacking the rootfs
    ---
    You just created an Ubuntu container (release=trusty, arch=amd64, variant=default)
    To enable sshd, run: apt-get install openssh-server
    For security reason, container images ship without user accounts
    and without a root password.
    Use lxc-attach or chroot directly into the rootfs to set a root password
    or create user accounts.
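The three interactive answers (distribution, release, architecture) can also be passed on the command line, which is handy when the container has to be recreated. The sketch below only prints the command instead of running it; the helper name create_cmd is made up for this example, while -d, -r and -a are the options of the download template.

```shell
#!/usr/bin/env bash
# Print the non-interactive equivalent of the interactive lxc-create dialogue.
# Everything after "--" is passed to the download template:
#   -d distribution, -r release, -a architecture
# create_cmd is a hypothetical helper name used only in this sketch.
create_cmd() {
    local name="$1" dist="$2" release="$3" arch="$4"
    printf 'lxc-create -n %s -t download -- -d %s -r %s -a %s\n' \
        "$name" "$dist" "$release" "$arch"
}

# Values used in this article.
create_cmd unetlab ubuntu trusty amd64
```

Running the printed command as root should give the same result as answering the three prompts by hand.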

We can verify that the container was created with the lxc-ls command:

    PS ~ # lxc-ls -f
    NAME         STATE    AUTOSTART  GROUPS  IPV4  IPV6
    TestujemLXC  STOPPED  0          -       -     -
    temp         STOPPED  0          -       -     -
    unetlab      STOPPED  0          -       -     -

Now go to /var/lib/lxc (the default home folder for all LXC containers). Typing ls there, you will see a folder for your LXC container:

    S lxc # ls -al
    drwx------  5 root root 4096 Dec 12 12:24 .
    drwxr-xr-x 87 root root 4096 Nov 22 12:04 ..
    ...
    drwxrwx---  3 root root 4096 Dec  8 12:15 unetlab

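Open the container's config file:

vim /var/lib/lxc/unetlab/config

and add the default gateway and the container's own IP address:

    # def. gw
    lxc.network.ipv4.gateway = 192.168.10.1
    # the ip address of the LXC container itself
    lxc.network.ipv4 = 192.168.10.111/24

Also point the network link at the bridge:

lxc.network.link = bridge0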

The complete config file then looks like this:

    # Template used to create this container: /usr/share/lxc/templates/lxc-download
    # Parameters passed to the template:
    # For additional config options, please look at lxc.container.conf(5)

    # Uncomment the following line to support nesting containers:
    #lxc.include = /usr/share/lxc/config/nesting.conf
    # (Be aware this has security implications)

    # Distribution configuration
    lxc.include = /usr/share/lxc/config/ubuntu.common.conf
    lxc.arch = x86_64

    # Container specific configuration
    lxc.rootfs = /var/lib/lxc/unetlab/rootfs
    lxc.rootfs.backend = dir
    lxc.utsname = unetlab

    # Network configuration
    lxc.network.type = veth
    lxc.network.link = bridge0
    lxc.network.flags = up
    lxc.network.hwaddr = 00:16:3e:61:b1:24
    lxc.network.ipv4.gateway = 192.168.10.1
    lxc.network.ipv4 = 192.168.10.111/24
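If you set up more than one container, the three network lines can be generated instead of typed. This is only a sketch: the function name lxc_static_net is invented for this example, and it uses the lxc.network.* key names from the LXC version in this article (LXC 3.0 and later renamed them to lxc.net.0.*). It prints the lines for review rather than writing to the config file.

```shell
#!/usr/bin/env bash
# Print the static network settings used in this article for a given
# bridge, container address (CIDR) and gateway.
# lxc_static_net is a hypothetical helper; key names follow the
# lxc.network.* scheme shown in the config above.
lxc_static_net() {
    local bridge="$1" addr="$2" gateway="$3"
    cat <<EOF
lxc.network.link = ${bridge}
lxc.network.ipv4 = ${addr}
lxc.network.ipv4.gateway = ${gateway}
EOF
}

# Values from this article.
lxc_static_net bridge0 192.168.10.111/24 192.168.10.1
```

Merge the printed lines into the existing network block by hand. The config created by the template already has an lxc.network.link line, so change that one rather than adding a second.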

This configuration assigns the static IP address; however, the container's system will also ask for a dynamic one. We should correct this by manually editing the container's main network config file. There are several ways to do that. However, right after creation the container's system is completely minimal, without any editor or extra packages installed. The simplest option is therefore to edit the file directly from the main host system (Linux Mint in my case). So open

vim /var/lib/lxc/unetlab/rootfs/etc/network/interfaces

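Here /var/lib/lxc/unetlab/rootfs/ is the container's root filesystem as seen from the host, and /etc/network/interfaces is the network config file inside it.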

In this file, just change the line

iface eth0 inet dhcp

to

iface eth0 inet manual
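The same one-line change can be made from the host without an editor. A minimal sketch, assuming the file contains exactly the line "iface eth0 inet dhcp"; the function name set_eth0_manual is made up here, and the demonstration runs on a scratch file rather than on the real container file.

```shell
#!/usr/bin/env bash
# Switch eth0 from dhcp to manual in an ifupdown interfaces file.
# set_eth0_manual is a hypothetical helper name for this sketch.
set_eth0_manual() {
    local file="$1"
    # -i.bak edits in place and keeps the original as FILE.bak.
    # Only a line that is exactly "iface eth0 inet dhcp" (optionally with
    # trailing whitespace) is rewritten.
    sed -i.bak 's/^iface eth0 inet dhcp[[:space:]]*$/iface eth0 inet manual/' "$file"
}

# Demonstration on a scratch copy.
demo="$(mktemp)"
printf 'auto eth0\niface eth0 inet dhcp\n' > "$demo"
set_eth0_manual "$demo"
cat "$demo"
```

For the container in this article the call would be set_eth0_manual /var/lib/lxc/unetlab/rootfs/etc/network/interfaces, run as root on the host.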

Now we are able to start the container and access it.

Start the container:

lxc-start -n unetlab

where -n defines the name of the container (unetlab in this example).

Now check whether it is running:

    lxc-ls -f
    NAME     STATE    AUTOSTART  GROUPS  IPV4            IPV6
    ...
    unetlab  RUNNING  0          -       192.168.10.111  -
    ...

We can see that it is running and that it has the static IP address we just assigned. We are also able to ping it from the main system:

    ping 192.168.10.111
    PING 192.168.10.111 (192.168.10.111) 56(84) bytes of data.
    64 bytes from 192.168.10.111: icmp_seq=1 ttl=64 time=0.076 ms
    64 bytes from 192.168.10.111: icmp_seq=2 ttl=64 time=0.033 ms
    ...
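The state check can also be scripted, for example to start further steps only once the container is up. A small sketch that reads lxc-ls -f style output and prints the STATE column for one container; the function name container_state is invented for this example, and the sample text simply mirrors the listing in this article.

```shell
#!/usr/bin/env bash
# Print the STATE column for one container from `lxc-ls -f` style output
# read on stdin. container_state is a hypothetical helper name.
container_state() {
    awk -v name="$1" '$1 == name { print $2 }'
}

# Sample input with the same column layout as the listing in this article.
sample='NAME    STATE   AUTOSTART GROUPS IPV4           IPV6
unetlab RUNNING 0         -      192.168.10.111 -'

printf '%s\n' "$sample" | container_state unetlab

# On a real host (not executed here, needs LXC and root privileges):
#   lxc-ls -f | container_state unetlab
```

For a plain "wait until it is up" step, LXC also ships lxc-wait, e.g. lxc-wait -n unetlab -s RUNNING.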

Starting work with the container

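To install a package inside the container from the host, run a single command through lxc-attach:

lxc-attach -n unetlab -- apt-get install PACKAGE_NAME

for example:

lxc-attach -n unetlab -- apt-get install openssh-server mc vim screen

Alternatively, attach to the container without a command:

lxc-attach -n unetlab

This command attaches to the container's console directly, so we can see that the shell prompt has changed, as we are now inside: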

    root@unetlab:/# pwd
    /

Now we can directly type

apt-get install PACKAGE_NAME

Installing UNetLab

Now we will install the UNL software. UNL has guides (http://www.unetlab.com/documentation/index.html) for installing on different platforms, for example virtualization technologies like VMware Player/Workstation/ESX. But it also supports installation on a physical server (bare-metal hardware). I follow this option, since we already have Ubuntu prepared within our LXC container.

To do that, attach to the container (lxc-attach -n NAME) and first check that we have connectivity:

    root@unetlab:/# ping 8.8.8.8
    PING 8.8.8.8 (8.8.8.8) 56(84) bytes of data.
    64 bytes from 8.8.8.8: icmp_seq=1 ttl=57 time=10.9 ms
    64 bytes from 8.8.8.8: icmp_seq=2 ttl=57 time=10.7 ms
    ^C

Then install curl:

apt-get install curl

run the UNL install script:

curl -s http://www.unetlab.com/install.sh | bash

and wait until it finishes.
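Piping a script from the network straight into bash gives no chance to see what it does. If you prefer to look first, one option is to download the script to a file, read it, and only then run it. A minimal sketch; the function name fetch_script and the path /tmp/unl-install.sh are made up for this example.

```shell
#!/usr/bin/env bash
# Download a script to a file so it can be reviewed before it is executed.
# fetch_script is a hypothetical helper name for this sketch.
fetch_script() {
    local url="$1" dest="$2"
    # -f: fail on HTTP errors, -sS: quiet but still print errors,
    # -L: follow redirects, -o: write to the given file
    curl -fsSL -o "$dest" "$url"
}

# Usage inside the container (not executed here):
#   fetch_script http://www.unetlab.com/install.sh /tmp/unl-install.sh
#   cat /tmp/unl-install.sh     # review what it will do
#   bash /tmp/unl-install.sh
```

The result should be the same as with the one-liner, just with a review step in between.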

Now you just need to add some router images (a tutorial for adding Cisco IOS images is here: http://www.unetlab.com/2014/11/adding-dynamips-images/#main ) and then start creating topologies and learning networking!

Known issues

After some testing with Cisco and Juniper images, I'm able to run only the Cisco dynamips images; Junos has problems starting :-(.